Artificial Emotion: A Worst-Use Case for AI

Somewhere between the continental breakfast and the cocktail hour, a roomful of attorneys learned that AI can now feel our feelings for us. At the recent estate planning conference I attended, some of the ideas for using AI were wise, if perhaps obvious to anyone who has been in the workplace for the last twelve months: It’ll take notes for you! Create your PowerPoints for you! Do research for you! These suggestions came with similarly obvious warnings: it will sometimes make things up (oops), plagiarize (shucks), or expose your private information (darn it).

But what made me genuinely uncomfortable was a different suggestion entirely: that professionals should use AI to help them with what many lawyers apparently find the most challenging part of their job — empathizing with their clients.

You can, the presenter asserted, use AI to draft those more touchy-feely communications. Client’s husband just died and you don’t know what to say? Ask Claude to write a soothing email. Client’s daughter just celebrated her First Communion and you don’t know what Communion is? Ask ChatGPT to draft an appropriate card. According to this presenter, writing legal briefs and court filings is the easy part of his job. The softer skills — the ones where you actually connect with the people who are paying you — have always been a challenge. But now AI writes his emails for him, finding the appropriate emotional cadence and cultural associations to help him relate to his clients. All without requiring him to confront his own, clearly unaddressed, difficulties with human emotion.

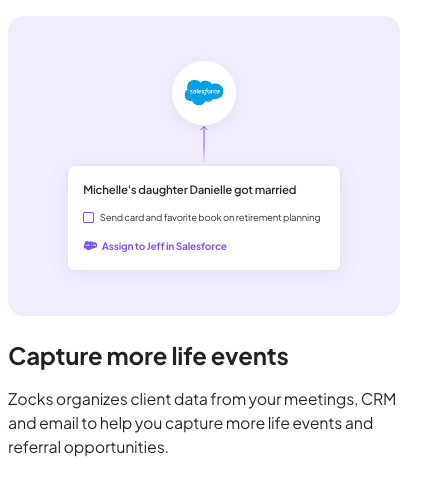

This presentation reminded me of a nearly identical one at the recent Financial Planning Association conference. The presenter was the founder of Zocks, “the AI Assistant for Financial Advisors,” which can help you “automate your meeting notes, intake forms, client emails, and more.” He told a roomful of financial advisors that AI isn’t replacing us humans; it’s freeing us up to focus more on the personal side of things, rather than wasting time on bureaucratic nonsense. Great. That sounds good.

But then he showed us how he now records all client meetings, feeds the transcripts into AI, and generates summaries, PowerPoints, and even podcasts for clients to review afterward. “Check this out!” he said, moving his pointer to a slide featuring two football mascots. “AI even picked up on the clients’ mention of their favorite teams and added it to the presentation material!”

So the promise was that AI would handle the impersonal, mechanical tasks so that advisors could invest more time and attention in the human relationship. And what we got was AI handling the human relationship so that advisors could... do what, exactly?

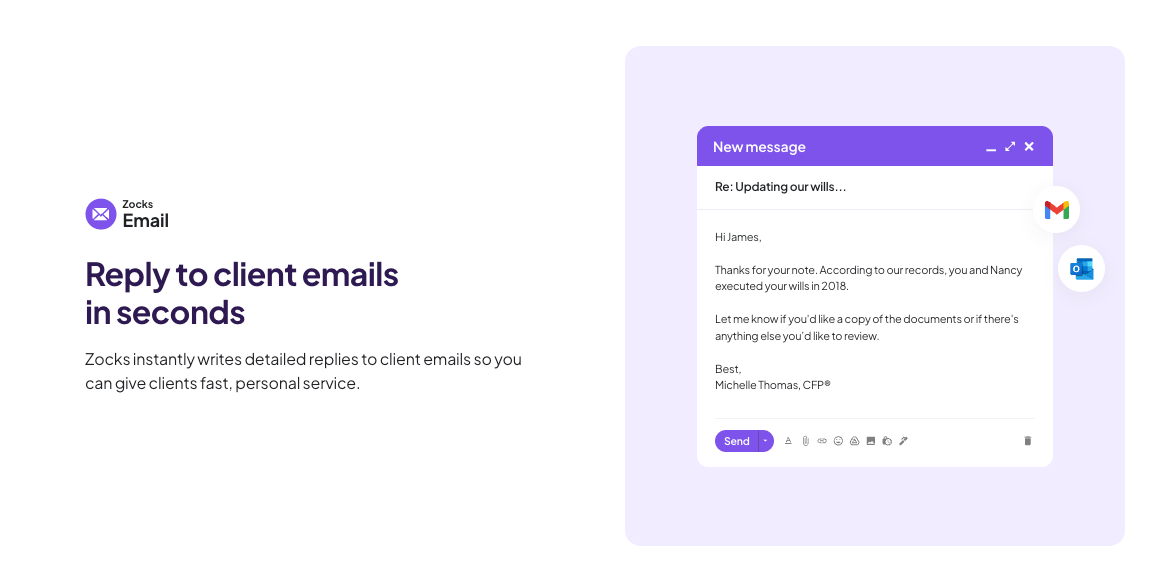

This presenter, too, was enthusiastic about AI-assisted email: tools that read your inbox, research responses, and draft replies on your behalf. When someone in the audience asked whether the clients actually want AI doing all of this, he cited his own company’s research showing that high-net-worth individuals expect their advisors to be using AI — and would be disappointed to find out they weren’t.

Sure. Not using AI at this point would be like refusing to use a calculator to do algebra. I’m not a romantic about analog processes. But is using AI to organize spreadsheets really the same as using AI to write your condolence note? Is that what clients are imagining when they say they expect their advisors to use AI — that the first draft of your sympathy email came from a machine? That the football reference in the PowerPoint wasn’t a sign that you were listening, but rather that the software was?

The whole pitch at both conferences rested on a contradiction that didn’t seem to concern the presenters: AI will free us to be more human, but AI is great because it can do all the human stuff, too. Personally, while I accept Artificial Intelligence, I am deeply uncomfortable with Artificial Emotion.

Which brings me to a question I’ll leave with you: If your attorney’s condolence note was written by Claude, do you have the right to know that? If the football reference in your advisor’s recap deck was added by an algorithm that parsed a transcript, is that the same as your advisor remembering? Does it matter? I’d love to hear your thoughts.